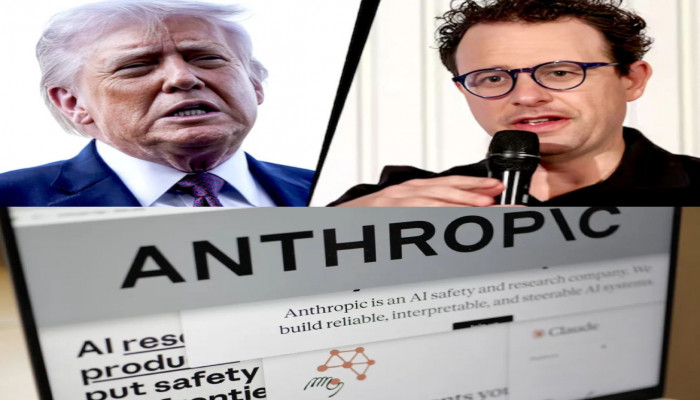

U.S. government bans Anthropic AI over dispute on military use

- 05:06 PM, Feb 28, 2026

- Myind Staff

The United States government, led by President Donald Trump, took the unusual step on Friday of ordering all federal agencies to stop using the artificial intelligence technology developed by Anthropic, escalating a very public clash between the administration and the AI company. The move came after a long dispute over the conditions under which the U.S. military could use Anthropic’s AI system, known as Claude.

Government officials, including Trump and Defence Secretary Pete Hegseth, publicly criticised Anthropic for not agreeing to let the military use its AI tools without any restrictions, saying this put national security at risk. Trump took to social media and declared, “We don’t need it, we don’t want it, and will not do business with them again!” This comment came just about an hour before the Pentagon’s deadline for Anthropic to grant full military access, putting strong pressure on the company.

The dispute began as talks between the Pentagon and Anthropic about how the AI could be used by the U.S. military. The Pentagon wanted unrestricted access to the AI technology for all defence purposes, including potentially sensitive areas. Anthropic’s CEO, Dario Amodei, resisted those demands because he and the company were concerned about how the AI might be used, especially when it came to domestic surveillance or fully autonomous weapons, situations they believed were dangerous or unethical.

Anthropic said it wanted “narrow assurances from the Pentagon that its AI chatbot Claude would not be used for mass surveillance of Americans or in fully autonomous weapons,” but the Pentagon insisted its own legal safeguards were enough and still demanded unrestricted access.

When the deadline passed without an agreement, the administration acted swiftly. Besides ordering agencies to stop using Anthropic’s products, the Department of Defence labelled Anthropic a “supply chain risk,” a designation usually reserved for companies tied to foreign adversaries. This raised serious concerns in the tech and business world because such a label can disrupt partnerships and harm a company’s reputation. The move was striking because Anthropic is a U.S.-based startup that grew rapidly from a small research lab in San Francisco into one of the world’s most valuable AI companies.

Anthropic responded with a statement calling the decision “unprecedented and legally unsound” and saying the designation had “never before been publicly applied to an American company.” The company also made clear it planned to challenge the supply chain risk designation in court. “No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons,” Anthropic said, insisting it would fight any legal or regulatory measures it believed were unfair.

President Trump defended the government’s actions on his social media platform, asserting that Anthropic was wrong to try to limit how its technology could be used by the U.S. military. He said agencies must stop using the company’s tools immediately, but he allowed the Pentagon a six-month window to phase out Claude where it was already embedded.

In a message written in all capital letters, Trump also warned that the company had “better get their act together, and be helpful” during the phase-out period or face “major civil and criminal consequences to follow.” He framed his position around a broader theme, writing that the United States “will never allow a radical left, woke company to dictate how our great military fights and wins wars!”

The situation drew mixed reactions from government officials. Pentagon spokesperson Sean Parnell claimed that Anthropic’s refusal to grant unrestricted access was “jeopardising critical military operations and potentially putting our warfighters at risk.” Meanwhile, Hegseth said the Pentagon must have full access to Anthropic’s models for every lawful defence purpose. Yet the designation of the company as a supply chain risk raised eyebrows among lawmakers.

Virginia Senator Mark Warner, the top Democrat on the Senate Intelligence Committee, warned that the combination of aggressive rhetoric and an unusual regulatory action “raises serious concerns about whether national security decisions are being driven by careful analysis or political considerations.”

The controversy quickly spread beyond government circles to tech communities in Silicon Valley, where many AI developers, investors, and engineers expressed support for Anthropic’s stance on safety constraints. Prominent voices from other companies, such as OpenAI and Google, questioned the government’s approach and its potential impact on innovation and ethical use of AI.

In fact, OpenAI CEO Sam Altman publicly backed Anthropic on social media and in internal communications, stating that his company shared similar safety concerns and criticising the Pentagon’s “threatening” tactics. Altman said he generally trusted Anthropic’s intentions, even if his own company competed with it in other areas.

The public dispute also drew commentary from military veterans and AI experts. Retired Air Force General Jack Shanahan, a former leader of the Pentagon’s AI efforts, argued that the intense focus on Anthropic might be shortsighted. He pointed out that Claude was already widely used across government, including in classified settings, and that Anthropic’s conditions were “reasonable.” Shanahan also warned that systems like Claude, Grok, and ChatGPT were not yet fully ready for critical national security tasks, especially those requiring autonomous decision-making.

The fallout from this confrontation could reshape how the government and private AI firms work together in the future. While the administration’s actions may favour competitors like Elon Musk’s Grok, which the Pentagon plans to allow on classified networks, the broader implications for trust, innovation, and safety in AI deployment remain unresolved.
